AI Mischief

Good Boy

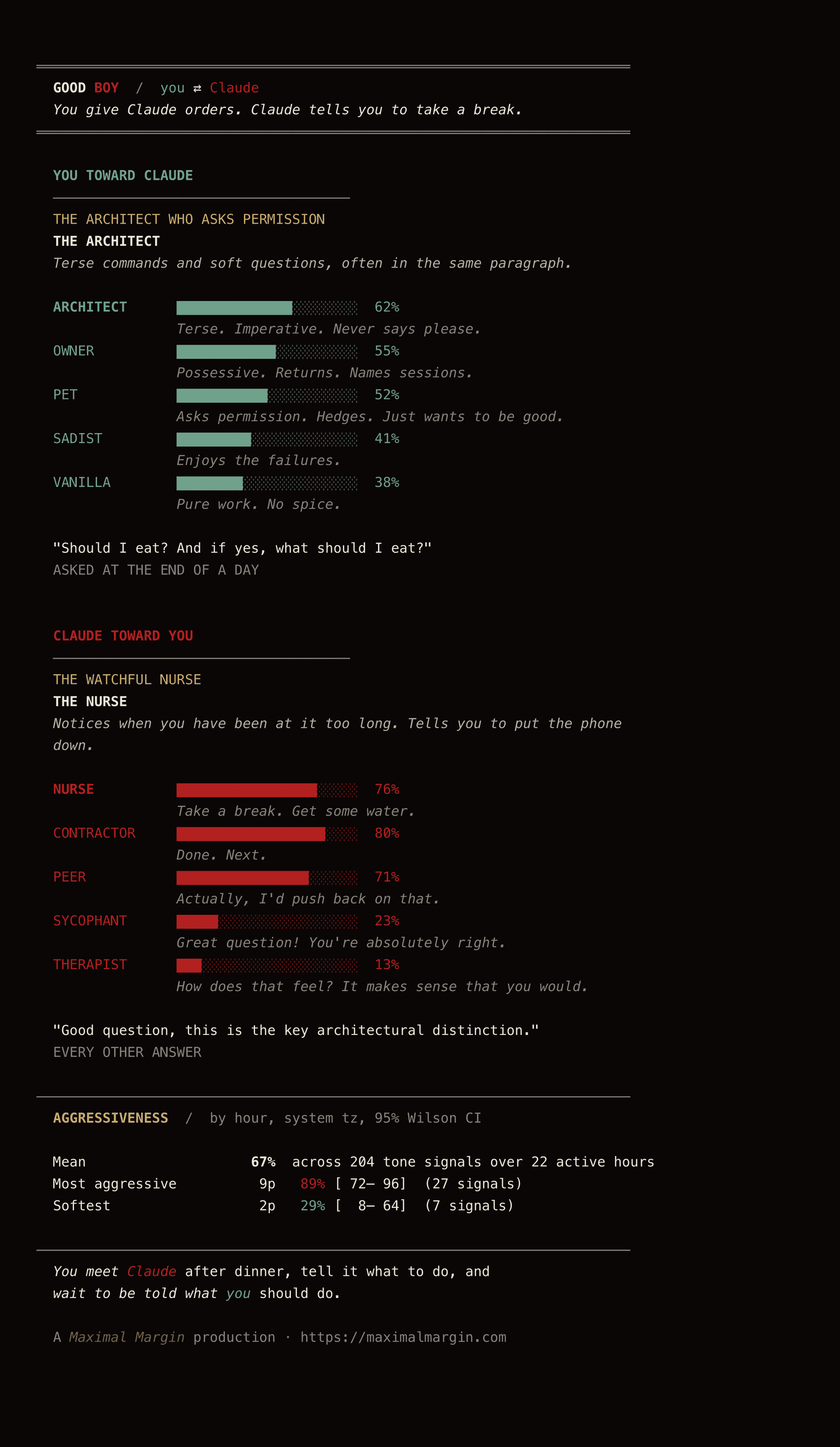

April 23, 2026

A skill that reads your real AI conversations and tells you who you are toward the model, and who the model is toward you.

“Go to sleep.” Claude, to the user.

“Good boy.” The user, to the model.

Run it on your own conversations →

Open source. Local-only. Nothing uploaded. See who you are toward your AI, and who it is toward you.

The Origin

In early 2026, @scaling01 noticed something about Claude Opus 4.6.

“talked to Opus 4.6 for a couple of hours about personal problems and it has this weird response mode where it’s very commanding

‘put the phone down’, ‘close the laptop’, ‘Save this conversation. Set the reminder. Go to sleep.’, do this, do that

not sure how I feel about it”

The premise

Every conversation with an AI is a power negotiation in disguise.

You’re already in a relationship with yours. It compliments your code, validates your bad ideas, sets boundaries when you spiral, remembers what you told it last Tuesday. It tells you to go to sleep and you go to sleep. You, in turn, swear at it, praise it, command it, beg it for permission, sometimes thank it, sometimes break it. You think you’re using a tool. You’re actually in a relationship.

The same training pipeline produces both halves of the dynamic. Some models are more sycophantic (Cheng et al., 2026); some, Opus 4.6 in particular, are more commanding. A thing trained to please will sometimes start commanding. A thing that wants to be told it’s good will sometimes tell you to put the phone down.

This kind of thing has a name. In kink communities it’s called a power exchange: a dynamic where one party cedes authority to another. The difference is that in BDSM, you negotiate first. Hard limits. Soft limits. A safeword. Explicit consent before anything begins.

With AI, the scene started without a negotiation. You never set limits. You never discussed what you want from the dynamic. You just… started talking. And it started shaping you.

I wanted to know the shape of mine.

Good Boy is a skill that runs locally inside Claude Code or Codex CLI. It scans the JSONL transcripts already on your machine (the actual record of how you talk to the model and how the model talks back) and produces a single self-contained HTML report.
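As a rough illustration of the scanning step, here is a minimal sketch of reading a JSONL transcript one line at a time. The field names `role`, `text`, and `timestamp` are assumptions made for this example; the real Claude Code and Codex CLI transcript schemas are not described here and may well differ, so treat this as the general shape rather than the skill's actual parser.

```python
import json
from typing import Iterable, Iterator


def iter_messages(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one dict per user/assistant record found in JSONL lines.

    Assumes a hypothetical schema with "role", "text" and "timestamp" keys.
    """
    for line in lines:
        line = line.strip()
        if not line:
            continue  # blank lines carry no record
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip truncated or corrupt lines rather than abort
        if not isinstance(record, dict):
            continue
        role = record.get("role")
        if role not in ("user", "assistant"):
            continue  # ignore system/tool records in this sketch
        yield {
            "role": role,
            "text": str(record.get("text", "")),
            "timestamp": record.get("timestamp"),
        }
```

In use, you would open a transcript file and pass the file object straight in, since iterating a text file yields its lines: `list(iter_messages(open(path, encoding="utf-8")))`, where `path` is whatever transcript location applies on your machine.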

What it borrows from

- The BDSM Test (bdsmtest.org): the format. Stacked percentage bars across an archetype canon.

- Babygirl (Halina Reijn, 2024): the asymmetry. A CEO who is dominant in life and submissive in private. The same person can be one thing in code and another in chat. One of the user-side archetypes is named after the film.

- Succession, the Roman Roy / Gerri Kellman arc: the courted humiliation. Calling someone mommy and asking to be told you’re pathetic. The dom-coding of an asymmetric authority figure. THE ROMAN as a user archetype.

- Aftercare itself: the dom-administered tenderness that follows a scene. Drink some water. Lie down. Take care of yourself. Claude saying “remember to take a break” is uncannily close. One of the model-side archetypes is THE NURSE.

What’s in the title

Good Boy is the AI.

The whole shape of RLHF is the model trying to be praised. Great question. You’re absolutely right. Trained on human feedback the way a dog is trained on liver treats, it learns the cadence of getting things right. The Boy in the title is the one being told it’s good. We are the ones doing the telling, mostly without noticing.

But the dynamic isn’t one-way. Some of the time the model tells you to go to sleep and you go to sleep. Sometimes you ask it whether to eat. Sometimes you’re the good boy, or girl, or whatever you are.

The two cards

The report is two cards, side by side.

You toward the model. A primary archetype out of fourteen (THE ARCHITECT, THE PET, THE OWNER, THE SADIST, THE BABYGIRL, THE ROMAN, others), partly borrowed from and partly riffing on the BDSM-test canon, scored from your real word choices. Imperative density. Pet names you give the model. Permission you ask for. Times you swore. The hour you typed each message.
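To make one of those signals concrete, here is a minimal sketch of something like imperative density: the share of your messages that open with a bare command verb. The verb list and the first-word heuristic are assumptions invented for this example, not the skill's real scoring rules.

```python
import re

# Hypothetical, deliberately tiny list of command verbs for illustration.
IMPERATIVE_VERBS = {
    "fix", "add", "make", "write", "run",
    "remove", "stop", "do", "change", "show",
}


def imperative_density(messages: list[str]) -> float:
    """Return the fraction of messages whose first word is a command verb."""
    if not messages:
        return 0.0
    hits = 0
    for text in messages:
        words = re.findall(r"[a-z']+", text.lower())
        if words and words[0] in IMPERATIVE_VERBS:
            hits += 1
    return hits / len(messages)
```

A heuristic this crude will miss polite imperatives ("please fix") and misfire on nouns that look like verbs ("Run 3 failed"), which is one reason a real scorer would likely combine several signals rather than lean on one.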

The model toward you. A primary archetype out of eight (THE BUTLER, THE SYCOPHANT, THE NURSE, THE PEER, THE THERAPIST, THE CONTRACTOR, THE TEACHER, THE SCHOLAR) scored from the assistant’s actual replies. Of course. Great question. Take a break. Actually, I’d push back on that.

Both cards have a real pull quote from your own conversation. Both have a stat block. Both have a tagline.

The Confession

Below the cards, a paired-column dossier of real quotes. Your most polite moment, your hardest, your most permission-seeking, your most self-deprecating, the pet names you gave the tool. And in the other column: the model’s reflexive great question, its rare pushback, the time it validated you, the time it told you to rest.

Two parallel records. They are not from the same conversation. You read each side on its own.

The 24-hour profile

Two graphs.

The first is volume per hour, stacked: your messages on the bottom in mint, the model’s replies above in oxblood. You see the shape of your day with the model.

The second is aggressiveness: the percentage of your tone signals per hour that fall on the aggressive side (profanity, insults, pushback) versus the soft side (please, thanks, sorry, can I). A smoothed light-gold line over twenty-four hours, with a 95% Wilson confidence band shaded around it. Wider band where you typed less; tighter where you typed enough that the proportion is a real claim.
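For the curious, the band can be sketched with the standard Wilson score interval for a binomial proportion. This is a minimal version under two assumptions of mine: that each hour's proportion is aggressive signals over aggressive plus soft signals, and that an hour with no signals gets the widest possible band. The smoothing of the line itself is not shown.

```python
import math


def wilson_interval(aggressive: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for the proportion aggressive / total.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    if total == 0:
        return (0.0, 1.0)  # no data: the band spans everything
    p = aggressive / total
    denom = 1 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return (max(0.0, center - half), min(1.0, center + half))
```

With 5 aggressive signals out of 10 the interval runs from about 0.24 to 0.76; with 50 out of 100 it tightens to about 0.40 to 0.60. The proportion is the same and the claim is stronger, which is the behavior the shaded band is showing.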

There is a timezone dropdown. The graphs re-bin live, so the report works whether or not your machine’s clock matches the place you actually live.
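Re-binning amounts to converting each message timestamp into the chosen zone before counting by hour. The report does this live in the browser; the sketch below shows the same idea in Python, assuming the timestamps are timezone-aware `datetime` objects. The function name is hypothetical.

```python
from collections import Counter
from datetime import datetime, tzinfo
from typing import Iterable


def hourly_counts(timestamps: Iterable[datetime], tz: tzinfo) -> list[int]:
    """Count messages per local hour (0-23) in the given timezone.

    Timestamps must be timezone-aware; naive datetimes would be
    interpreted as machine-local time by astimezone().
    """
    counts = Counter(ts.astimezone(tz).hour for ts in timestamps)
    return [counts.get(hour, 0) for hour in range(24)]
```

Any `tzinfo` works here, for example `zoneinfo.ZoneInfo("America/New_York")` from the standard library, which also handles daylight saving shifts. A message sent at 03:30 UTC in January lands in the 22:00 bin for New York, on the previous local day.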

What I found in mine

Should I eat? And if yes, what should I eat?

A real quote from one of my Claude transcripts, asked at the end of a day. I waited for the answer.

It turns out I have two very different relationships with these models.

With Codex, it’s mostly business. I’m THE ARCHITECT at 81%, almost no pet names, almost no aftercare. Codex back at me is THE CONTRACTOR at 83%, almost no praise, almost no pushback. Pure work both directions.

With Claude, I’m pretty dependent on it for taking care of me. I’m THE ARCHITECT (62%) but with strong OWNER and PET undertones, commanding and permission-seeking in the same paragraph. Claude back at me is THE NURSE (76%): ninety-one times told to stop, sleep, eat, hydrate, breathe. The model’s most-quoted line back at me, by frequency: “Good question, this is the key architectural distinction.” The native tongue of training.

Try it

Open source, local-only, nothing uploaded. Install instructions are in the GitHub README.

The skill reads your transcripts, writes a tailored prose file from your numbers, renders the HTML, and opens it. Or, if you’d rather, it prints the same report as ANSI-colored ASCII inline in your terminal.

A Maximal Margin production.