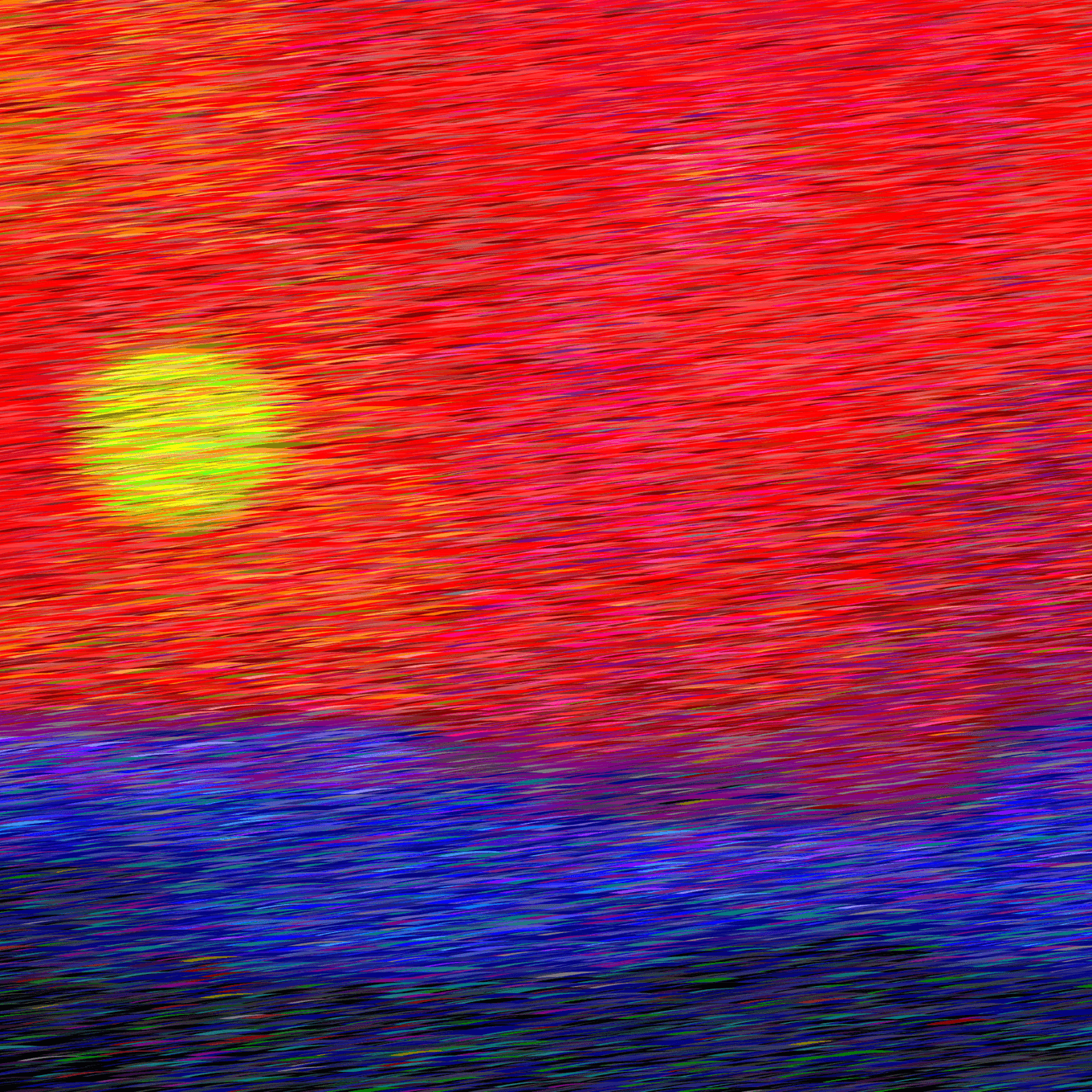

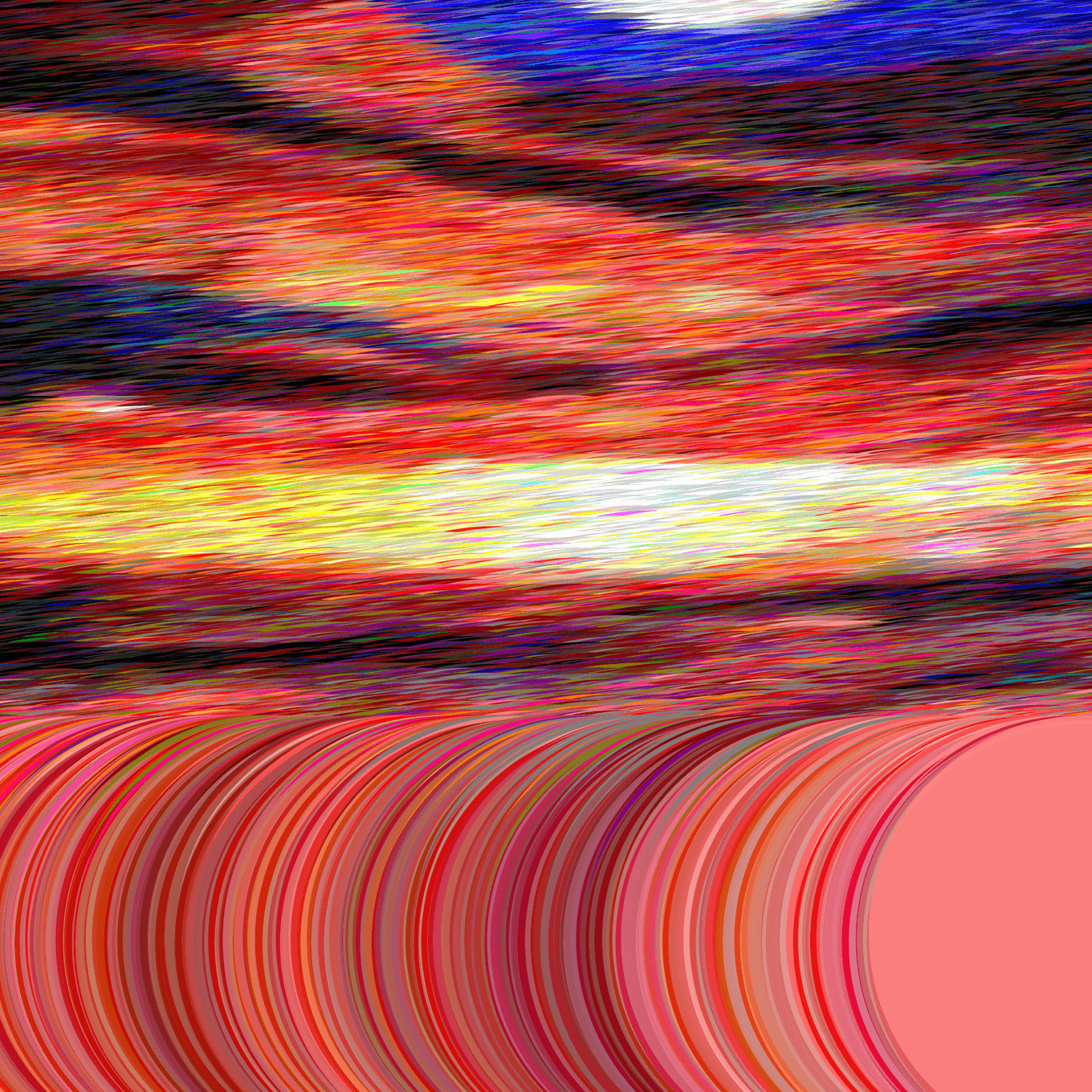

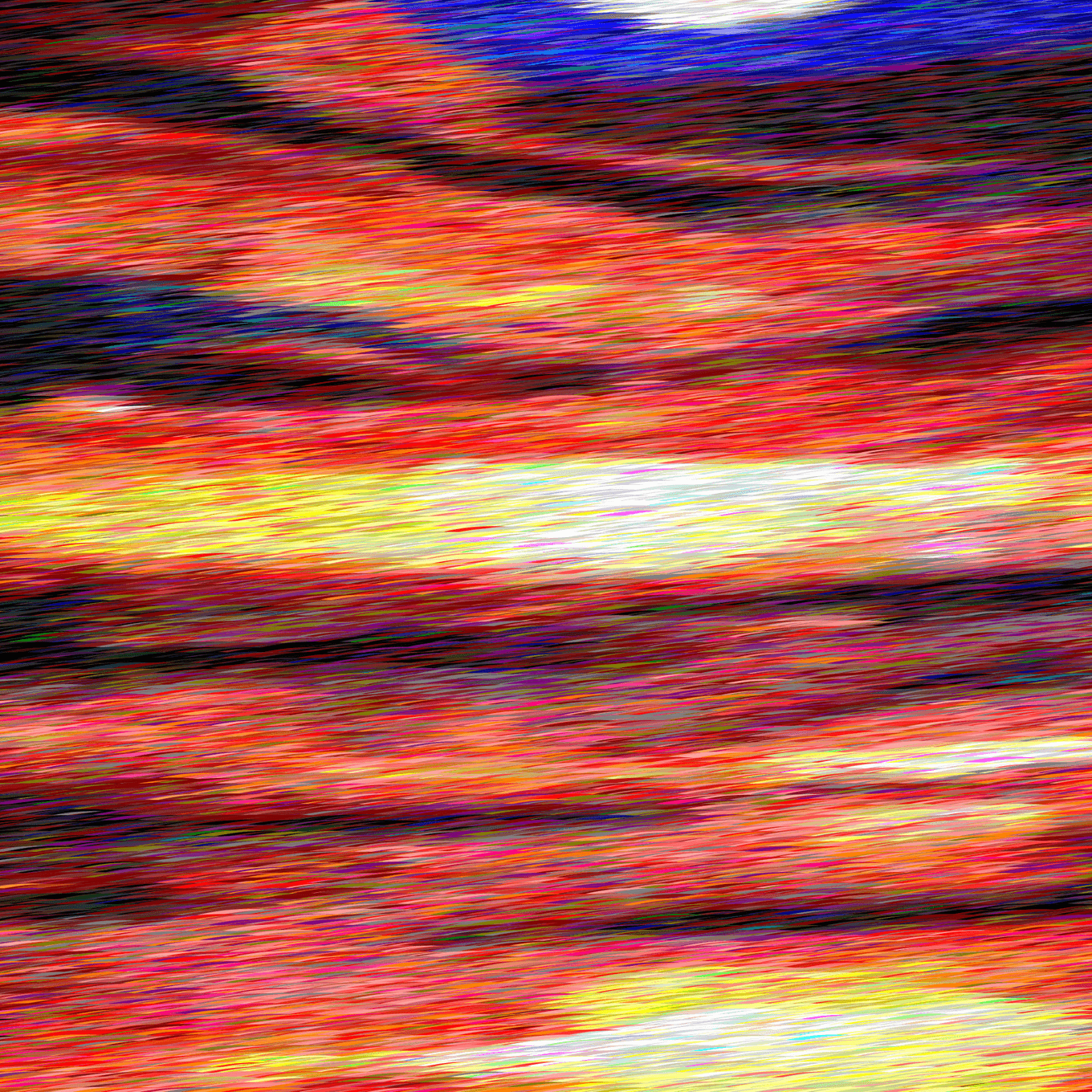

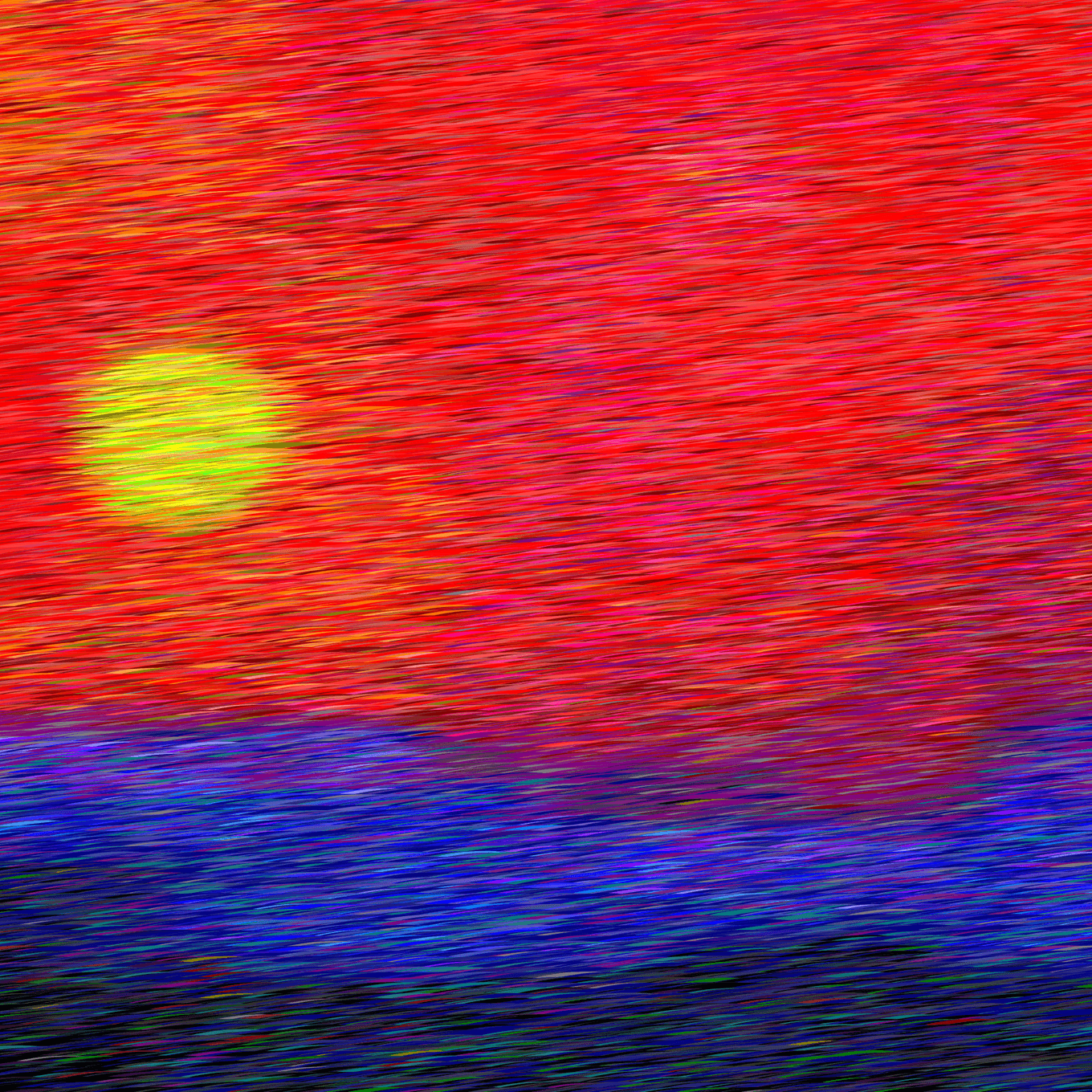

Abstract Sunset

The AI sees the sunset 20 times in a day. One loves the sunset, when one is so sad.

January 05, 2022

Inspiration

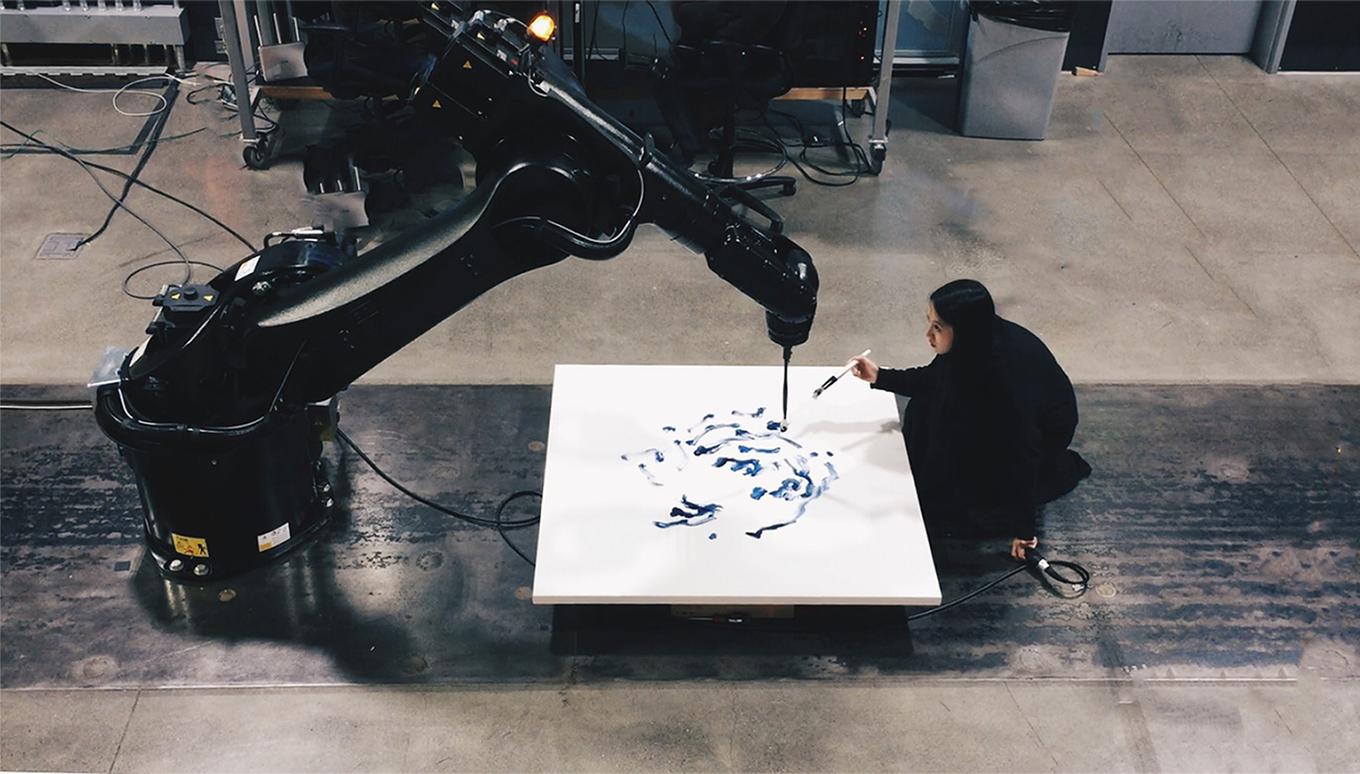

I really love Sougwen’s work Drawing Operations, in which the artist collaborates on drawings with a robotic arm.

Never in history have we been so dependent on machines. As Tyler Hobbs argues in his essay The Importance of Generative Art:

A considerable amount of our lives are now spent in programmed software environments. […] Art must keep up with evolutions in the fabric of society, and coding isn’t just shaping our buildings (although it is doing that), it’s shaping our relationships, our communication, our consumption, our creation, our learning, our memory, our very view of ourselves.

In particular, I am fascinated by how AI shapes our relationships. Take OpenAI’s GPT-3 language model: it can power a wide variety of applications, including chatbots, customer service bots, and even robotic therapists, each of which presents unique challenges as well as opportunities.

I also think art should keep up with AI as it evolves. On that note, I enjoyed Pamela Mishkin’s wonderful essay in The Pudding, Nothing Breaks Like A.I. Heart.

So if I ask an AI to draw an abstract sunset 20 times, what will it see? And can I collaborate with it to make the result more organic?

Technical Notes

Last month, OpenAI released a new paper, GLIDE: Towards Photorealistic Image Generation and Editing with Text-Guided Diffusion Models. It describes a 3.5-billion-parameter text-to-image diffusion model whose samples human evaluators favored over those of OpenAI’s own DALL-E (Salvador Dalí plus Pixar’s WALL·E, get it?).

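The code is open-sourced at https://github.com/openai/glide-text2im, together with a smaller GLIDE model trained on filtered data (not the full 3.5-billion-parameter model).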
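For context on the “text-guided” part of the title: the paper compares CLIP guidance with classifier-free guidance and reports that human evaluators prefer the latter. With classifier-free guidance, the model predicts the noise twice at each sampling step, once with the caption c and once with an empty caption, and extrapolates from the unconditional prediction toward the conditional one: ε̂ = ε(x_t | ∅) + s · (ε(x_t | c) − ε(x_t | ∅)). Below is a minimal sketch of only that combining step, in plain Python over flat lists. The function name and toy numbers are hypothetical and not taken from glide-text2im; a real sampler applies the same arithmetic to image-shaped tensors at every denoising step.

```python
from typing import Sequence


def classifier_free_guidance(
    eps_cond: Sequence[float],
    eps_uncond: Sequence[float],
    guidance_scale: float,
) -> list[float]:
    """Combine conditional and unconditional noise predictions.

    eps_hat = eps_uncond + s * (eps_cond - eps_uncond)
    With s = 1 this returns the conditional prediction unchanged;
    s > 1 pushes the sample further toward the text prompt.
    """
    if len(eps_cond) != len(eps_uncond):
        raise ValueError("predictions must have the same length")
    return [u + guidance_scale * (c - u) for c, u in zip(eps_cond, eps_uncond)]


# Toy numbers standing in for one flattened noise prediction (hypothetical).
print(classifier_free_guidance([0.5, -0.25, 1.0], [0.25, 0.25, 1.0], 3.0))
# → [1.0, -1.25, 1.0]
```

Larger guidance scales trade sample diversity for closer adherence to the prompt, which is why the scale is usually exposed as a tunable parameter.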

I used that code to prompt the model for 20 images from the text “an abstract painting of a sunset”. Then, for each output, I used the Floyd-Steinberg dithering technique from The Coding Train to calculate the values to be painted. Finally, I experimented with strokes and settled on painting with giant circles (their edges, to be exact) for a more organic feel.
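Floyd-Steinberg dithering quantizes pixels in scan order (top to bottom, left to right) and pushes each pixel's rounding error onto neighbours that have not been visited yet, with weights 7/16 (right), 3/16 (below-left), 5/16 (below) and 1/16 (below-right). A minimal grayscale sketch in plain Python follows, assuming the image is a list of rows of 0-255 values; the `levels` parameter (number of gray levels) and all function names are hypothetical, and the project's actual code and settings are not shown in this post.

```python
Grid = list[list[float]]


def quantize(value: float, levels: int) -> float:
    """Snap a 0-255 value to the nearest of `levels` evenly spaced gray levels."""
    step = 255 / (levels - 1)
    clamped = min(255.0, max(0.0, value))  # accumulated error can overshoot
    return round(clamped / step) * step


def floyd_steinberg(gray: Grid, levels: int = 2) -> Grid:
    """Return a dithered copy of a grayscale image given as rows of 0-255 values."""
    if levels < 2:
        raise ValueError("levels must be at least 2")
    img = [[float(v) for v in row] for row in gray]  # float copy; input untouched
    if not img:
        return img
    h, w = len(img), len(img[0])
    for y in range(h):  # scan top to bottom, left to right
        for x in range(w):
            old = img[y][x]
            new = quantize(old, levels)
            img[y][x] = new
            err = old - new
            # Push the error onto neighbours that have not been visited yet.
            if x + 1 < w:
                img[y][x + 1] += err * 7 / 16
            if y + 1 < h:
                if x > 0:
                    img[y + 1][x - 1] += err * 3 / 16
                img[y + 1][x] += err * 5 / 16
                if x + 1 < w:
                    img[y + 1][x + 1] += err * 1 / 16
    return img


print(floyd_steinberg([[128, 128, 128, 128]]))  # → [[255.0, 0.0, 255.0, 0.0]]
```

A flat mid-gray row turns into alternating black and white pixels, so the average brightness survives even though every pixel now takes one of only two values.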
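The post does not spell out how the dithered values become circles, so the sketch below is only one hypothetical reading of painting with the edges of giant circles: it samples a grayscale grid of 0-255 values (for instance, a dithered image) every `step` pixels and emits an unfilled SVG `<circle>` whose outline takes that pixel's gray value. SVG output, grayscale input, and the names `circles_svg`, `step`, `radius`, `stroke_width` and `opacity` are all assumptions made for illustration; a color version would read an RGB triple per pixel instead.

```python
def circles_svg(
    grid: list[list[float]],
    step: int = 8,
    radius: float = 60.0,
    stroke_width: float = 1.0,
    opacity: float = 0.25,
) -> str:
    """Render sampled 0-255 gray values as outlines of large circles (SVG).

    Every `step`-th pixel becomes the centre of an unfilled circle whose
    stroke uses that pixel's gray value; the overlapping, translucent edges
    build up the picture. All parameter defaults are illustrative.
    """
    if not grid:
        raise ValueError("grid must not be empty")
    h, w = len(grid), len(grid[0])
    parts = [
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{w}" height="{h}">',
        f'<rect width="{w}" height="{h}" fill="white"/>',
    ]
    for y in range(0, h, step):
        for x in range(0, w, step):
            v = round(min(255.0, max(0.0, grid[y][x])))  # clamp, then to int
            parts.append(
                f'<circle cx="{x}" cy="{y}" r="{radius}" fill="none" '
                f'stroke="rgb({v},{v},{v})" stroke-width="{stroke_width}" '
                f'stroke-opacity="{opacity}"/>'
            )
    parts.append("</svg>")
    return "\n".join(parts)


demo = circles_svg([[0, 255], [255, 0]], step=1, radius=10)
print(demo.count("<circle"))  # → 4
```

Writing the returned string to a `.svg` file and opening it in a browser is enough to preview it; because only the strokes are drawn, the image emerges from where many large outlines overlap rather than from solid dots.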

Some examples of AI-generated images vs. final outputs: